Bring Your Own Power (BYOP)

A deep dive on how data center developers plan to power their projects

Hi there,

This piece was contributed by Tommy Hughes, with editorial overlay from yours truly. Tommy works on strategic accounts at PowerFlex, where he focuses on scaling distributed energy solutions. More of his writing can be found on his Substack.

DEEP DIVE

I have spent the better part of the past year silently tracing a changing digital supply chain across developers, utility executives, county planners, environmental advocates, and a few random conversations with residents who attend zoning meetings to monitor industrial expansion because they’re bored. The prevailing sentiment is not one of shared progress, but of rapid, opaque transformation. We are arguably witnessing the fastest buildout of industrial infrastructure ever, arriving in the form of data centers: windowless fortresses of steel that consume staggering (slightly unfathomable) amounts of electricity, water, and land. This growth is fundamentally rewriting the assumptions that have governed the power sector for decades.

Industry once treated electricity as a dependable, invisible input: someone else’s problem. Today, electricity, along with many of the essential components needed to produce it, such as gas turbines, has become the central constraint. Interconnection queues stretch into the 2030s, substations are scarce, and transformer lead times are measured in years rather than weeks or months. Cleanview’s recent analysis of 46 behind-the-meter data center projects, representing 56 gigawatts of planned capacity, indicates that roughly 30% of all planned U.S. data center capacity is now moving toward self-supplied power. Notably, 90% of that capacity was announced in 2025 alone. A niche workaround has become a core strategy. Developers are bringing their own power, not as temporary backup, but as one way to deliver the baseline reliability and capacity their projects need to be viable. This is the "on-site power arms race," and beyond all the buzz about AI itself, it is an important frame through which to view every attendant discussion of AI, data centers, and all they may or may not enable in the coming years.

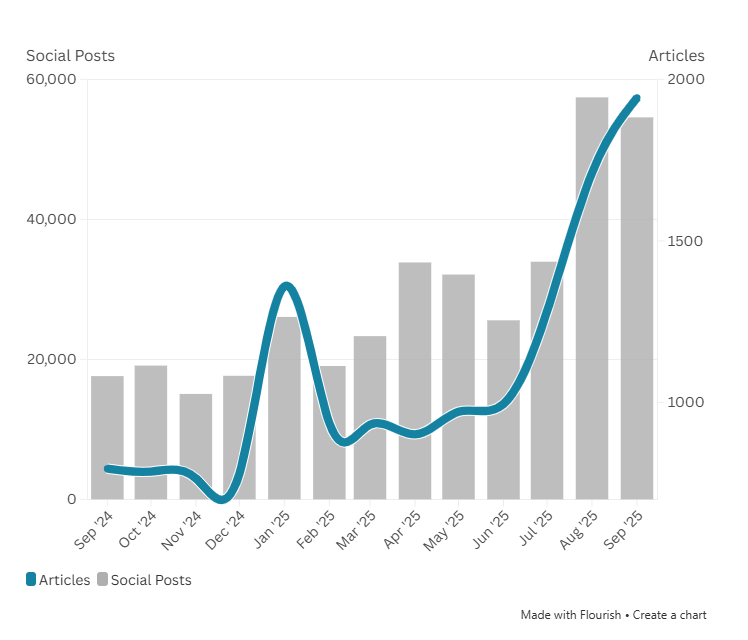

Mentions of “data center” in the media (original report from INK Media)

1. How time-to-power shapes data center development

In technical discussions of data center development, the focus has shifted almost entirely to a single data point: the energization date. For developers, this date is the primary bottleneck in their project timelines. The shift is driven by the underlying math of running and iteratively advancing AI. Data centers accounted for around 4% of total U.S. electricity demand in 2023, a share projected to grow to 9% by 2030, and they are expected to account for 15–20% of all U.S. load growth over the next decade. Graphics Processing Units (GPUs) used for generative AI are also estimated to be 10 to 15 times more electricity-intensive than the CPUs used in traditional computing. That appetite for GPU compute helps explain how Nvidia, the most prominent producer of GPUs, reaped $68 billion in revenue in its most recent quarter, roughly $62 billion of it from its data center segment.

The current U.S. grid, which for decades handled relatively stable levels of electricity production, transmission, and distribution, isn’t up to this growth pattern, let alone on the schedule developers require. Indeed, the grid was already overburdened before the current infrastructure and AI boom. Interconnection risk has become a permanent feature of the development landscape, for data centers and other projects alike, and schedule certainty is increasingly rare. Grid Strategies estimates a 16% surge in power demand over just four years, reaching 90 GW by 2029, while Goldman Sachs estimates data center power demand will grow 160% by 2030.

Recent project-level data shows how unreliable nominal commissioning dates have become. Sightline Climate’s Q1 2026 Outlook notes that of 110 data center projects slated to come online last year, 26% were delayed and another 10% quietly shifted their commercial operation dates amid power, permitting, and construction constraints. Looking ahead, at least 16 GW of capacity is nominally scheduled to come online in 2026 across 140 projects, but only 5 GW is actually under construction. Sightline expects 30–50% of those 2026 projects to be delayed, with roughly 11 GW still stuck at the “announced” stage despite typical build times of 12–18 months. Time-to-power is now the central development constraint, not an afterthought to be left for utilities to sort out later on their own.
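The Sightline figures above can be cross-checked with a few lines of arithmetic. This is a minimal Python sketch using only the numbers quoted in the paragraph; applying the 30–50% delay range to project count (rather than to capacity) is our assumption, not necessarily how Sightline reports it.

```python
# Figures as quoted from Sightline Climate's Q1 2026 Outlook.
projects_last_year = 110
share_delayed = 0.26          # formally delayed
share_shifted = 0.10          # quietly moved their commercial operation dates

scheduled_2026_gw = 16.0
under_construction_2026_gw = 5.0
projects_2026 = 140
delay_range = (0.30, 0.50)    # Sightline's expected delay range for 2026

# How many of last year's projects slipped in some form.
slipped_last_year = projects_last_year * (share_delayed + share_shifted)

# Share of 2026 capacity physically under construction, and the remainder.
built_share = under_construction_2026_gw / scheduled_2026_gw
not_yet_building_gw = scheduled_2026_gw - under_construction_2026_gw

# Assumption: the delay range is applied to the number of projects.
delayed_2026 = [projects_2026 * r for r in delay_range]

print(f"Slipped last year: ~{slipped_last_year:.0f} of {projects_last_year} projects")
print(f"2026 capacity under construction: {built_share:.0%} "
      f"({not_yet_building_gw:.0f} GW not yet building)")
print(f"2026 projects likely delayed: {delayed_2026[0]:.0f}-{delayed_2026[1]:.0f} of {projects_2026}")
```

The 11 GW gap between scheduled and under-construction capacity matches the roughly 11 GW that Sightline still counts at the announced stage.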

Cleanview’s aforementioned research details how developers are responding. They are procuring whatever generation equipment is available: refurbished industrial turbines, aeroderivative turbines adapted from jet-engine designs, reciprocating engines, and mobile gas generators mounted on semi-trucks (or, at least, they are working to make these alternatives feasible and easy to integrate). One developer recently placed a $1.25 billion order with Boom Supersonic, a company that has never sold a power generation product, simply to secure turbines the company says it will be able to make. The quest for the perfect energy source has given way to a more desperate “anything that hums” policy.

Of course, the stakes are high, and not just because of competition and the race to build ever more powerful models. Current estimates suggest that AI data centers can generate between $10 million and $12 million in revenue per megawatt of capacity annually. At gigawatt scale, that represents $10 billion to $12 billion a year. Bringing a campus online even two years ahead of a grid connection can thus yield tens of billions in additional revenue. Consequently, developers are willing to overlook everything from high fuel costs to novel engineering challenges and lower efficiencies, and, lest we forget, the potential for a spike in greenhouse gas emissions, to secure power as fast as physically possible.
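The revenue arithmetic behind that claim is simple enough to sketch. The helper below is hypothetical and deliberately crude: it assumes the full capacity earns the quoted $10–12 million per megawatt-year for the entire head start, ignoring ramp-up, utilization, and the cost of on-site power.

```python
def head_start_revenue_usd(capacity_mw: float, revenue_per_mw_year: float,
                           years_early: float) -> float:
    """Gross revenue earned by energizing ahead of a grid connection.

    Simplification: full capacity earns the stated rate for the whole head start.
    """
    return capacity_mw * revenue_per_mw_year * years_early


for rate in (10e6, 12e6):  # $10M-$12M per MW per year, per the estimates above
    total = head_start_revenue_usd(capacity_mw=1_000, revenue_per_mw_year=rate, years_early=2)
    print(f"${rate / 1e6:.0f}M per MW-year -> ${total / 1e9:.0f}B over two years")
```

Because this is gross revenue rather than margin, treat it as a rough ceiling on what a head start is worth, not a measure of profit.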

2. The anatomy of modern data centers

The following “stacks” represent the hardware currently being deployed to these ends. Traditionally, data centers rely on a 2N redundancy scheme, meaning the facility has two completely independent power systems: if one fails entirely, the other is not merely available to dispatch but already running and able to carry the full load on its own. The “BYOP” era elevates components once reserved for backup to primary duty.
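To make the 2N idea concrete, here is a minimal sketch contrasting it with the N+1 scheme often used for individual components such as generators. The function names, the 20 MW load, and the unit sizes are illustrative assumptions; real redundancy analysis also has to account for shared switchgear, buses, and fuel supply, which this ignores.

```python
def meets_2n(side_a_mw: list[float], side_b_mw: list[float], load_mw: float) -> bool:
    """2N: each independent side can carry the full critical load on its own."""
    return sum(side_a_mw) >= load_mw and sum(side_b_mw) >= load_mw


def meets_n_plus_1(units_mw: list[float], load_mw: float) -> bool:
    """N+1: the load is still served after losing the largest single unit."""
    return sum(units_mw) - max(units_mw) >= load_mw


critical_load_mw = 20.0  # hypothetical critical IT load

print(meets_2n([10, 10], [10, 10], critical_load_mw))  # True: 40 MW installed
print(meets_n_plus_1([5] * 5, critical_load_mw))       # True: 25 MW installed
print(meets_n_plus_1([5] * 4, critical_load_mw))       # False: no spare unit
```

Note the difference in installed capacity: 2N carries 40 MW of equipment for a 20 MW load, while N+1 gets by with 25 MW.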

Stack 1: Diesel gensets

A diesel genset is a coupled package of a compression-ignition engine and an alternator that produces AC power on demand. In a traditional data center architecture, these units sit downstream of the utility feed as the source of last resort, synchronized via paralleling switchgear to share the load during a grid failure. In dense corridors like Northern Virginia, the expected operating envelope is changing, with approximately 4,000 generators now permitted to run up to 500 hours per year for testing and reliability events. During an outage, the critical IT load rides on uninterruptible power supply (UPS) batteries, typically sized for several minutes of runtime, while the generators start, reach rated speed, and stabilize voltage. Once output is stable, automatic transfer switches move the load to the generator-backed buswork to maintain continuous uptime. However, concentrating so many generators creates significant localized air quality issues, as diesel exhaust contains nitrogen oxides, benzene, and arsenic. A single 1.3-million-square-foot facility in Wisconsin, for example, is permitted to emit nearly 90,000 tons of CO2e, primarily from these testing and emergency cycles. Consequently, while diesel remains the industry standard for reliability, its cumulative environmental footprint is coming under increasing regulatory scrutiny.

More traditional, “standby” diesel generators for data centers (Central States Diesel Generators)
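The UPS-to-generator handoff described in Stack 1 comes down to a timing and energy budget. This Python sketch sizes the battery energy for a ride-through window and checks how much margin remains once the generators start, stabilize, and accept the load. The 10 MW load and all timing values are hypothetical placeholders, not vendor or site data, and battery aging and inverter losses are ignored.

```python
def ups_energy_mwh(it_load_mw: float, ride_through_min: float) -> float:
    """Battery energy (MWh) needed to carry the IT load for the ride-through window."""
    return it_load_mw * ride_through_min / 60.0


def handoff_margin_s(ride_through_min: float, gen_start_s: float,
                     gen_stabilize_s: float, transfer_s: float) -> float:
    """Seconds of battery runtime left after the generators take over the load."""
    return ride_through_min * 60.0 - (gen_start_s + gen_stabilize_s + transfer_s)


# Hypothetical site: 10 MW of critical IT load, 5 minutes of UPS ride-through.
energy = ups_energy_mwh(it_load_mw=10.0, ride_through_min=5.0)
margin = handoff_margin_s(ride_through_min=5.0, gen_start_s=10.0,
                          gen_stabilize_s=20.0, transfer_s=1.0)

print(f"UPS energy required: {energy:.2f} MWh")
print(f"Margin after handoff: {margin:.0f} s")
```

A large margin is deliberate: it covers a failed first start attempt or a generator that is slow to synchronize.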

Stack 2: Gas microgrids

A gas microgrid is a behind-the-meter power plant composed of multiple natural gas prime movers, such as reciprocating engines or aeroderivative turbines, orchestrated by a sophisticated microgrid controller. This controller acts as the system's brain, managing voltage and frequency locally to enable islanding, in which the data center operates independently of the utility grid. Cleanview’s research indicates that roughly three-quarters of the identified behind-the-meter equipment, totaling 23 GW, is now natural-gas-powered. Developers favor this stack because gas infrastructure is often more readily available than high-voltage grid interconnections, offering a more predictable energization timeline. Mechanically, these systems use modular engine blocks, so capacity, and the capital spent on it, can be added in increments as the data center's power demand grows. While these microgrids offer a plausible path to rapid deployment, they tether the digital economy to fossil-fuel infrastructure for the long term. The trade-off for schedule certainty is a complex web of air permitting and pipeline constraints that can become a secondary bottleneck.
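The modular scaling described above can be sketched as a simple sizing rule: for each build-out phase, install enough units to carry the load plus a spare. The 4.5 MW unit rating, the phase loads, and the single-spare (N+1) policy are hypothetical assumptions chosen for illustration, not figures from Cleanview.

```python
import math


def engines_needed(load_mw: float, unit_mw: float, spares: int = 1) -> int:
    """Units required to carry load_mw, plus `spares` extra units (N+spares)."""
    return math.ceil(load_mw / unit_mw) + spares


unit_mw = 4.5                    # hypothetical reciprocating-engine rating
phase_loads_mw = [50, 100, 200]  # hypothetical phased campus load

installed = 0
for load in phase_loads_mw:
    total = engines_needed(load, unit_mw)
    # Capital is committed only for the units each phase actually adds.
    print(f"{load:>4} MW phase: {total} units total, {total - installed} added")
    installed = total
```

The point of the modular approach is visible in the "added" column: capital goes out in steps that track the server-hall build-out rather than all at once.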

Stack 3: Private gas plants

For the largest hyperscale campuses, developers are bypassing modular solutions in favor of utility-scale, on-site gas-fired power plants. These facilities often use heavy-duty gas turbines or combined-cycle configurations to deliver hundreds of megawatts of dedicated baseload power. By building their own behind-the-meter plants, developers reduce the financial risk associated with multi-year utility interconnection delays. These projects are most prevalent in regions with high natural gas production and regulatory environments that favor rapid industrial development. However, these plants are land-intensive and require significant infrastructure, including dedicated high-pressure gas pipelines and cooling water systems. They also require rigorous permitting for noise and air emissions, as they essentially function as private utilities. While they provide the ultimate level of power independence, they represent a massive, long-term commitment to carbon-intensive generation.

Stack 4: Fuel cells

Fuel cells generate electricity through an electrochemical reaction between a fuel, typically natural gas or hydrogen, and an oxidant, rather than through combustion. In current data center deployments, these systems usually use solid oxide fuel cell (SOFC) technology, which can run on natural gas from the existing pipeline network while being hydrogen-ready for the future. They offer quieter operation and a cleaner local plume than reciprocating engines, making them easier to permit in noise-sensitive areas. However, they currently depend almost entirely on natural gas for their economic viability, meaning they are not yet a truly carbon-free solution. The modular nature of fuel cell energy servers allows developers to add capacity in small increments, matching the phased build-out of server halls. Despite the forward-looking narrative of a hydrogen economy, high capital costs and reliance on fossil fuels remain significant hurdles. For now, fuel cells serve as a premium, lower-emission bridge for developers facing intense community or regulatory pressure.

Stack 5: Grid-interfacing batteries and UPS

The Uninterruptible Power Supply (UPS) is the critical link in a data center’s electrical chain, traditionally providing about 5 minutes of ride-through time using lead-acid or lithium-ion batteries. In the “BYOP” model, these systems are evolving into larger grid-interfacing battery energy storage systems capable of handling more than emergency transitions. They allow facilities to perform peak shaving, reducing the load on on-site generators or the grid during periods of high demand. They also provide essential frequency regulation and voltage support, smoothing out the volatility inherent in islanded microgrid operations. Throughout, they preserve uptime by ensuring that sensitive IT equipment never loses power, even for a millisecond. As data centers move toward hybrid power, these batteries become a key tool for integrating intermittent renewables with firm on-site generation. They transform the data center from a passive consumer into a flexible, responsive node in larger systems.
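Peak shaving, in its simplest form, is a greedy dispatch rule: discharge the battery whenever load exceeds a target cap, and recharge from any headroom otherwise. The sketch below assumes hourly intervals, a battery that starts full, and no round-trip losses; the function name and all numbers are hypothetical, and real battery controllers use forecasting and far richer constraints.

```python
def peak_shave(load_mw: list[float], cap_mw: float, battery_mwh: float,
               power_mw: float, dt_h: float = 1.0) -> list[float]:
    """Greedy peak shaving. Returns the net load seen by generators or the grid."""
    soc = battery_mwh  # state of charge in MWh; assume the battery starts full
    net = []
    for demand in load_mw:
        demand = float(demand)
        if demand > cap_mw:
            # Discharge to hold load at the cap, limited by power and stored energy.
            discharge = min(demand - cap_mw, power_mw, soc / dt_h)
            soc -= discharge * dt_h
            net.append(demand - discharge)
        else:
            # Recharge using headroom below the cap, limited by power and free capacity.
            charge = min(cap_mw - demand, power_mw, (battery_mwh - soc) / dt_h)
            soc += charge * dt_h
            net.append(demand + charge)
    return net


load = [80, 95, 110, 120, 100, 85]  # hypothetical hourly load, MW
net = peak_shave(load, cap_mw=100, battery_mwh=40, power_mw=25)
print(f"Peak before: {max(load)} MW, after: {max(net):.0f} MW")
```

If a peak outlasts the stored energy, the cap simply fails, which is why storage alone cannot replace firm generation for a 24/7 load.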

Stack 6: Hybrid campuses

Hybrid campuses represent the most complex tier of on-site power, integrating solar arrays, battery storage, and firm, fuel-based generation into a single microgrid. A flagship example is Switch’s Citadel Campus in Nevada, which uses 127 MW of solar and 240 MWh of storage to serve the data center directly. These projects are designed to stay at least somewhat responsive to corporate sustainability goals (which have largely taken a backseat to building data centers and powering them at all costs) while preserving the reliability required for AI workloads. The technical challenge lies in “firming” the intermittent renewables, which requires sophisticated software to balance solar output with dispatchable gas engines or fuel cells. While these steps can help assuage community skepticism and reduce carbon footprints, they are exceptionally land-intensive and require massive upfront capital.
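Firming can be illustrated with a toy hourly dispatch: solar serves the load first, any surplus charges the battery, and dispatchable generation covers whatever is left. This Python sketch borrows the 127 MW and 240 MWh figures from the Citadel example purely for scale; the flat 100 MW load, the sine-shaped solar profile, the 60 MW battery power rating, and the function name are all hypothetical assumptions, not Switch's actual design or operations.

```python
import math


def firm_dispatch(load_mw, solar_mw, battery_mwh, battery_power_mw, dt_h=1.0):
    """Serve load from solar first, then storage, then firm (dispatchable) generation.

    Returns the firm generation required in each interval. The battery starts empty,
    round-trip losses are ignored, and solar surplus beyond charging is curtailed.
    """
    soc = 0.0
    firm = []
    for demand, pv in zip(load_mw, solar_mw):
        if pv >= demand:
            charge = min(pv - demand, battery_power_mw, (battery_mwh - soc) / dt_h)
            soc += charge * dt_h
            firm.append(0.0)
        else:
            deficit = demand - pv
            discharge = min(deficit, battery_power_mw, soc / dt_h)
            soc -= discharge * dt_h
            firm.append(deficit - discharge)
    return firm


hours = range(24)
load = [100.0] * 24  # hypothetical flat AI load, MW
# Hypothetical clear-day profile peaking at 127 MW around noon.
solar = [127.0 * max(0.0, math.sin(math.pi * (h - 6) / 12)) for h in hours]
firm = firm_dispatch(load, solar, battery_mwh=240.0, battery_power_mw=60.0)

print(f"Firm energy needed: {sum(firm):.0f} of {sum(load):.0f} MWh")
print(f"Hours needing firm output: {sum(1 for f in firm if f > 0)} of 24")
```

Even in this idealized, loss-free case, firm generation carries the load overnight and through the morning ramp, which is why hybrid sites still keep dispatchable units on hand.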

The physics of 24/7 uptime means that even the most advanced hybrid sites cannot yet abandon firm, combustion-based generation entirely, but they serve as a proof of concept for a less gas-dependent data center buildout. These hybrid architectures are also where the largest projects live. Sightline’s data shows that while on-site and hybrid campuses make up less than 10% of projects by count, they account for nearly half(!) of total announced capacity. Flagship sites like a 7-GW Lea County campus in New Mexico and a 1.8-GW gas-plus-renewables project in Wyoming are far too large to wait for conventional grid build-outs; they are effectively building their own power systems first and then hanging compute off the side. Perhaps because of that complexity, they remain rare by project count in the current build-out.

Stack 7: PPAs and direct asset acquisition

Another recent evolution in power strategy is the shift from Power Purchase Agreements (PPAs) to the direct acquisition of generation assets. Traditionally, tech companies signed long-term contracts to buy power from third-party wind or solar farms to offset their dirtier grid consumption. However, as grid capacity has tightened, hyperscalers like Amazon have begun buying shovel-ready projects outright, such as the 1.2 GW Sunstone solar-plus-storage facility in Oregon. Direct ownership allows companies to control the development timeline and ensure that power is physically available when the data center opens. It signals a fundamental lack of confidence in traditional utilities' ability to deliver promised capacity on a competitive schedule. By owning the generating assets outright, tech giants are effectively vertically integrating their energy supply chains. This strategy secures long-term price stability and carbon-free energy, but it also places the burden of infrastructure management directly on the technology firm.

Soon, but not yet: Small modular reactors

Small Modular Reactors (SMRs) are envisioned as the ultimate solution for carbon-free, high-density baseload power for data center campuses. These reactors are designed to be factory-built and shipped to a site, offering a smaller footprint and, in theory, lower costs than traditional large-scale nuclear plants. However, the technology remains aspirational in the context of the current AI build-out, as no commercial SMRs are yet operational in the U.S. That may well change in the coming years: many startups tout monthly, if not weekly, progress toward demonstrating their first reactors, and a cadre of them hopes to take reactors critical for the first time on or before July 4th this year.

The cautionary tale of Vogtle Units 3 and 4, which took 15 years and $36 billion to complete (running well over budget, with some of the overruns passed along to ratepayers), looms large over the industry’s nuclear ambitions. It also motivates work on SMRs, which ideally, once commercialized, can be produced, deployed, and energized more quickly and with more predictable CAPEX requirements. Still, anything resembling steady SMR deployment will start in 2027 or 2028 at the earliest, which is already at the far end of the window in which developers want to build, finish, and run data centers.

While tech companies are signing early-stage agreements to signal interest and spur development, regulatory and technical hurdles mean that many dozens of SMRs dotting the American landscape can only realistically begin to materialize in the 2030s, and even that would constitute a remarkable success. For a developer who needs power in 24 months, SMRs can’t be counted on in a construction Gantt chart.

Also ran: Resurrecting nuclear giants

The nuclear strategy of some hyperscalers has also shifted from awaiting future innovation to resuscitating the industry’s vintage giants. This asset reclamation play prioritizes certainty over novelty, as developers realize that the fastest way to secure a gigawatt of carbon-free baseload power can be to tether data centers directly to proven, existing assets. The most significant signal of this shift was AWS acquiring 1.9 GW of nuclear power for its campus from Talen Energy, which is connected (via some complicated metering techniques to avoid approval scrutiny) to the Susquehanna Steam Electric Station in Pennsylvania. By plugging directly into this 1980s-era plant, AWS effectively bypasses the years-long queue for grid interconnection. Even more unprecedented is Microsoft’s 20-year PPA with Constellation Energy to restart Unit 1 at Three Mile Island, a 1974-vintage reactor that was retired in 2019. Ironically, for some tech titans, the path of least resistance to ever-more advanced AI runs through 50-year-old cooling towers.

3. Geographic constraints

The choice of power stack is also dictated by local constraints. Where air permits for diesel are easy to obtain, diesel is used. Where gas pipelines are accessible, gas microgrids are built. Cleanview’s data shows that 83% of proposed behind-the-meter capacity is concentrated in just five states. These regions typically offer a combination of available fuel and regulatory frameworks that favor rapid industrial development.

Virginia is a great example. Data centers already consume approximately 25% of Virginia’s electricity, the highest share of any state. The only place in the world with more data centers than Virginia is China as a whole (and mind you, that’s one state versus a country of 1.4 billion people). Dominion Energy’s 2023 Integrated Resource Plan projects demand quadrupling within 15 years, driven largely by data centers. A Virginia legislative audit (JLARC) found that unconstrained power demand will double in the next 10 years, a trend that’s irreconcilable with existing clean energy and climate goals.
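The two Virginia projections are easier to compare as implied compound annual growth rates. This short sketch converts each multiple into an annual rate; note that the two forecasts may not share the same baseline or scope, so the comparison is indicative only.

```python
def implied_cagr(multiple: float, years: float) -> float:
    """Constant annual growth rate that produces `multiple` growth over `years`."""
    return multiple ** (1.0 / years) - 1.0


dominion_irp = implied_cagr(4.0, 15)  # demand quadrupling within 15 years
jlarc_audit = implied_cagr(2.0, 10)   # unconstrained demand doubling in 10 years

print(f"Dominion IRP: ~{dominion_irp:.1%} per year")
print(f"JLARC audit:  ~{jlarc_audit:.1%} per year")
```

Both projections imply sustained growth of roughly 7–10% per year, compounding for a decade or more.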

Texas has seen an enormous boom in both wind and solar, yet it remains a primary site for gas-heavy data center development due to its independent grid and proximity to gas production. Other fast-growing states include South Carolina, Arizona, and North Dakota, where commercial electricity demand is growing fastest due to large computing facilities.

4. Community impacts

The rapid deployment of on-site power has direct consequences for the communities hosting this infrastructure. Data centers are material infrastructure; they don’t exist in a theoretical vacuum, and they are part of extractive digital supply chains that depend on land, minerals, water, and energy.

Water consumption

Data centers are forecast to consume ~450 million gallons of water per day by 2030, up from 205 million in 2016. A typical facility uses between three and five million gallons per day, equivalent to the water needed for a city of 30,000 to 50,000 people. Despite this, a 2022 Uptime Institute survey found that only 39% of data center operators actually measure water usage.
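The figures in this paragraph hang together, as a quick check shows: both ends of the facility range work out to the same per-person equivalence, and the 2016-to-2030 forecast implies a steady annual growth rate. This sketch uses only the numbers quoted above.

```python
facility_gpd = (3_000_000, 5_000_000)  # typical facility, gallons per day
city_population = (30_000, 50_000)     # equivalent city size from the text

# Implied water use per resident behind the "city of 30,000 to 50,000" comparison.
gallons_per_person = [g / p for g, p in zip(facility_gpd, city_population)]

# Implied annual growth from 205M gal/day (2016) to ~450M gal/day (2030).
sector_growth = (450e6 / 205e6) ** (1 / (2030 - 2016)) - 1

print(f"Per-person equivalence: {gallons_per_person} gallons/day")
print(f"Implied sector growth: ~{sector_growth:.1%} per year")
```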

The impact is often underestimated. In North Holland, a Microsoft data center used 84 million liters of drinking water in 2021, more than four times the initial projection of 12–20 million liters. Furthermore, roughly three-quarters of the water footprint occurs indirectly at electricity generation sites (in cooling thermal power plants), meaning communities far from the data center are part of its water-impact geography. In Northern Virginia, the Occoquan Reservoir, which provides drinking water to 2 million residents, has seen alarming increases in sodium and other salt-related constituents linked to the expansion of impervious surfaces, such as data center roofs and parking lots.

It sounds like a lot, but in national terms, data centers remain a relatively small slice of total water use. U.S. agriculture withdraws on the order of 80–100 billion gallons of water per day. Even at 450 million gallons per day, projected data center usage would represent well under 1% of total U.S. freshwater withdrawals. That does not mean it doesn’t matter, particularly in water-stressed regions, but it reframes the discussion: data center cooling is a concentrated, visible load, whereas agriculture is a diffuse and structurally embedded one. Growing alfalfa to feed cows with water from the Colorado River is a much bigger problem than any typical data center’s water consumption.
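The "well under 1%" claim can be checked against the agricultural figure alone, since total freshwater withdrawals are larger still. One caveat: the 450 million gallons is described in the water paragraph as consumption, while the agricultural figure is withdrawals, so this is an order-of-magnitude comparison rather than a like-for-like one.

```python
dc_2030_gpd = 450e6                 # projected data center water use, gallons/day
ag_withdrawals_gpd = (80e9, 100e9)  # U.S. agricultural withdrawals, gallons/day

# Data center use as a share of agricultural withdrawals alone.
shares = [dc_2030_gpd / ag for ag in ag_withdrawals_gpd]
print(", ".join(f"{s:.2%}" for s in shares))
```

Even against agriculture alone, the projected figure lands at roughly half a percent.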

Land use and noise

Data centers are enormous buildings, often exceeding 100,000 square feet, that provide few long-term jobs relative to their footprint. They are a poor fit for walkable, transit-supportive mixed-use areas. In Chandler, Arizona, noise from cooling systems and backup generator testing led to revised ordinances in 2023 that imposed stricter standards. In Fairfax County, Virginia, new data centers are prohibited within one mile of metro stations to preserve transit-oriented development.

Legal battles are mounting across the country as data center proposals run into a familiar buzzsaw of local resistance, noise complaints, worries about property values and community character, and a growing willingness by counties and neighborhood groups to drag projects into hearings, permitting fights, and court. The politics are catching up to the physical reality: New York lawmakers have proposed a statewide moratorium on permitting for large (20+ MW) data centers while the state studies land use, pollution (explicitly including noise), and rate impacts, a sign that siting is becoming a front-end regulatory question rather than a back-end mitigation exercise. Public opinion is also more fragile than the industry may have originally assumed: a Heatmap Pro poll found only 44% of Americans would support a data center being built near where they live (42% oppose), with “local, tangible” downsides like water and electricity impacts proving especially persuasive, the classic ingredients for zoning boards, injunctions, and drawn-out permitting wars.

Even when national sentiment is “mildly positive,” backlash intensifies when a project becomes literal: a neighborhood-level fight over who absorbs the costs of the AI buildout and who captures the tax base. Washington’s emerging “BYONCE” posture, effectively telling developers to bring not just their own power but their own new clean energy, underlines the same land-use tension: if the data center arrives, the enabling infrastructure (generation, transmission, substations) tends to arrive with it, expanding the project’s spatial and political footprint well beyond the server halls themselves. Mind you, the opposition percolating out there is no joke, and it’s mounting: at least 25 data center developments were canceled due to local opposition last year! Communities across the country are making it clear in hearings and court filings alike: whatever arrives with the data center had better be clean, quiet, and out of sight to the extent possible. If a project could drive up electricity bills, strain local water supplies, or disrupt daily life, you’d be surprised at how hard local residents will work to thwart it, especially if developers make little effort to engage them and to ensure local taxpayers see real economic benefits.

Environmental justice

New fossil fuel infrastructure is frequently sited in already overburdened communities. In Chesterfield County, Virginia, residents have faced 80 years of coal-plant pollution, and cancer rates are in the 90th percentile nationally. Adding new gas-fired plants to support data center loads exacerbates existing inequities. Moreover, 29% of Virginians reported forgoing basic goods like food or medicine to afford energy bills in 2023; Dominion Energy projects that bills may triple to finance the infrastructure driven by data center loads. That’s an unconscionable added burden to Virginians, sans any direct assurances that the trillions reaped by a relative few as AI booms will somehow trickle back to them.

5. Governance and corporate tactics

The data center industry is increasingly adopting tactics reminiscent of older extractive industries. This includes veiling corporate identity behind local subsidiaries and using Non-Disclosure Agreements (NDAs) to withhold environmental information.

In The Dalles, Oregon, Google planned a data center on land formerly occupied by an aluminum smelter, co-opting associated industrial water rights. When a local newspaper requested water usage data, the city sued the paper to block disclosure, with Google paying the city’s legal costs. After a year of litigation, the city settled and released the data. This pattern of "proprietary" environmental data is a recurring theme in data center governance.

Environmental impact and permitting processes are almost universally project-by-project. There is no standard requirement to evaluate the regional cumulative impacts of clustered data centers on grid stability, air quality, or water quantity. Local governments are often drawn to data centers as "cash cows" because they generate significant property tax revenue while requiring few municipal services, but this often ignores the long-term public burden of infrastructure upgrades.

6. Utility response: lead, follow, or be routed around

Utilities are currently being "routed around" by the largest developers. When Amazon or other hyperscalers buy gigawatt-scale power projects outright, it is a clear sign that they are losing confidence in utilities as reliable partners for timely delivery. Some of it also reflects a recognition that it is not entirely fair to ask utilities to finance assets whose most immediate financial benefits flow to a select few rather than to the general public in a given jurisdiction. This creates a two-tier system. In some regions, utilities and developers coordinate to integrate behind-the-meter assets into regional planning. In others, the system is fragmented, with developers building private, islanded infrastructure that operates independently of the public grid. This fragmentation makes long-term decarbonization more difficult, as it locks in gas infrastructure that may operate for decades, and it splinters regulatory efforts, capital investment, data, and collaboration (“shared context”).

However, some grid operators are responding with a more nuanced "conditional access" model. Sightline’s Q1 2026 Outlook notes that regions like SPP and PJM are now offering faster interconnections to data centers that bring their own generation or demand flexibility. By embedding grid-upgrade charges into large-load tariffs and offering non-firm service contracts, operators are effectively allowing developers to connect sooner if they can use on-site capacity to ride through grid curtailments. This represents a negotiated detour where data centers become quasi-utilities, responsible for both their own security of supply and a share of the grid’s broader stability. This trade-off, flexibility in exchange for speed, is becoming a primary middle path for managing AI-scale demand.
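Whether a campus can accept a non-firm, conditional connection comes down to a simple adequacy check: during a curtailment event, can on-site generation plus storage cover whatever the grid stops delivering? The sketch below is a hypothetical screening function, not any grid operator's actual tariff logic. The load, curtailment level, and equipment sizes are invented, and real contracts add notice periods, event-frequency limits, and ramp constraints that are not modeled here.

```python
def ride_through_ok(load_mw: float, curtailed_grid_mw: float, onsite_gen_mw: float,
                    battery_mw: float, battery_mwh: float, event_hours: float) -> bool:
    """True if on-site resources cover the load for a curtailment event.

    Assumes on-site generation runs flat out for the whole event and the
    battery covers any remaining shortfall within its power and energy limits.
    """
    shortfall_mw = max(0.0, load_mw - curtailed_grid_mw - onsite_gen_mw)
    return shortfall_mw <= battery_mw and shortfall_mw * event_hours <= battery_mwh


# Hypothetical 300 MW campus whose grid supply drops to 150 MW during an event.
print(ride_through_ok(300, 150, 120, battery_mw=50, battery_mwh=100, event_hours=3))  # True
print(ride_through_ok(300, 150, 120, battery_mw=50, battery_mwh=100, event_hours=4))  # False
```

The second case shows why event duration matters as much as capacity: the same equipment that rides through a three-hour curtailment falls short at four.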

The net-net

The shift toward behind-the-meter power is a pragmatic response to a systemic failure to revitalize and upgrade the nation’s electrical grid, both to keep pace with growing demand, AI boom or not, and to decarbonize at the requisite scope and scale. Developers are opting for BTM solutions less out of a preference for self-sufficiency, per se, than out of sheer logistical and economic necessity. That this buildout is currently slated to depend on natural gas and diesel in many cases is also a product of logistical and economic necessity (solar and batteries only get you so far), but it still amounts to an indictment of corporate sustainability pledges from tech giants and others, adding to the long list of “green larping” examples enumerated in Keep Cool previously.

The on-site power arms race will create a massive fleet of grid-decoupled fossil fuel generators powering data centers, one that will drive additional greenhouse gas emissions for decades: a landscape of windowless, private fortresses of power production and the incessant whirr of ever faster, more complex, and increasingly even recursive computation. This decoupling allows for rapid growth, yes, but it also continues to externalize environmental and social costs onto local communities and the world at large while bypassing the traditional regulatory oversight that governs public utilities. A lot of what’s been discussed here is novel, groundbreaking, even revolutionary. It’s also a tale as old as time.

For those of you in the weeds of this topic yourselves, both Tommy and I would love additional input to flesh this out further. Let us know where there’s even more nuance and where we may have mischaracterized how things are actually shaping up on the ground.

— Tommy & Nick

Sources / references

Data Center Microgrid Diesel Gensets Market - Strategic Revenue Insights

Data Center Generator Market Report - Strategic Market Research

Fuel Cells Could Help Meet the Power Demand from Data Centers - Goldman Sachs

Scaling bigger, faster, cheaper: Data centers with smarter designs - McKinsey & Company

The Rise of AI: A Reality Check on Energy and Economic Impacts - Energy Analytics

Market Snapshot: Energy storage in Canada may multiply by 2030 - Canada Energy Regulator

North America Battery Energy Storage System Market - MarketsandMarkets

Design for More Efficient Data Centers Whitepaper - Honeywell

Global data center sector to nearly double to 200GW amid AI infrastructure boom - JLL

LinkedIn Post by Stanislav Masevych on Data Centers and Renewable Energy - LinkedIn

Data centers drive surge in clean energy procurement in 2024 - S&P Global

Data centers lead global growth in corporate PPAs - pv magazine USA

Diversity of power - the biggest data center energy stories of 2024 - DataCenterDynamics

A Reality Check of Corporate Procurement Trends in 2025 - Pexapark

Power and Energy Trends Shaping the Data Center Industry - Faegre Drinker

How Data Centers Are Reshaping Global Energy Procurement - NZero

Talen Energy Expands Nuclear Energy Relationship with Amazon - Talen Energy

Amazon to help power data centers with nuclear energy - Amazon

New supply agreement expands Talen-Amazon partnership - World Nuclear News

Amazon buys nuclear-powered data center from Talen - American Nuclear Society

TerraPower in Mega Deal with Meta for Eight Natrium 345 MW Advanced Nuclear Plants - Neutron Bytes

Amazon nuclear energy deal to power data centres - Datacentre Magazine

Bypassing the Grid: How Data Centers Are Building Their Own Power Plants - Cleanview (2026)

Lifset, R., Raparla, P., Stein, A., Bridges, L., McElfish, J., & Cywinski, T. (2025). Local environmental impacts of data center proliferation. Environmental Law Reporter, 55(2), 10131–10145.

Frenzel, J., Jansen, F., Liu, J., Ruddock, J., Finnegan, S., & Radloff, J. (2023, October). Digital infrastructures and environmental justice: Policies, practices, and visions. Panel presented at AoIR2023: The 24th Annual Conference of the Association of Internet Researchers, Philadelphia, PA, USA.

Mandal, R., Mondal, M. K., Banerjee, S., Chakraborty, C., & Biswas, U. (2021). A survey and critical analysis on energy generation from datacenter. In Data Deduplication Approaches (pp. 203–230). Elsevier.

Amazon acquires shovel-ready 1.2GW solar and storage facility in Oregon - DataCenterDynamics (2026)
